Failure Is Good, Actually

No, this is not going to be some hustleporn screed about failing fast and learning from it. I am talking about actual failure: crashing and burning and flaming out and really, really bad outcomes. Here's my point: when these bad things happen to the right people, they can be really good for the rest of us, and not just because we can enjoy the schadenfreude of terrible people messing up in public.

Here's how it works: a terrible person, let's call him Travis (for that is his name), spots an actual gap in the market: hailing taxis sucks, and when you can get one, they all mysteriously have broken credit card terminals. Travis therefore founds a company called, just for the sake of realism, Uber, and goes after that opportunity in the worst way imaginable.

Here's the thing: Travis and Uber weren't wrong about the opportunity, which is why Uber took off the way it did. Uber even had a very explicit strategy of weaponising the love users had for the service to put pressure on local governments to allow the service to launch in different locales. This strategy succeeded in both the short and the long term, but in very different ways.

In the early years, Uber was the latest poster child for the "move fast and break things" Silicon Valley tech bro attitude. Sure, Parisian taxi drivers rioted and set Uber cars on fire, and Italian taxi drivers managed to get UberX (known locally as UberPop; don't ask) banned, but in most places, Uber triumphed, mainly because the service was genuinely so much better than the status quo: you could summon a car right to your location, and when you arrived at your destination, you just got out and strolled off, with no haggling or searching for the right currency.

So much for the short term. In the longer term, all that moving fast and breaking things caught up with Travis and his company: VCs got tired of subsidising the true cost of Uber rides, and the resulting price rises made them far less competitive with actual licensed taxis. In the meantime, however, something interesting happened: the previously somnolent local taxi industries in every city suddenly found themselves facing an existential threat. They had been used to being monopolies, so they could set their own rules and control the number of entrants. Uber (and Lyft, Grab, et al.) upended that cozy status quo. After some flailing, and some bonfiring of Uber cars, the taxi industries woke up to the threat and addressed it in the best way: by going straight to the root of what customers had demonstrated they wanted.

Now, I can rock up in almost any decent-sized city in Europe, and with an app called Free Now, I can summon a car to my location, pay with a stored credit card, and hop out at my destination without worrying about currency conversion or losing a printed receipt. It sounds a lot like Uber, with a crucial distinction: the cars are locally licensed taxis, subject to all the standard licensing checks.

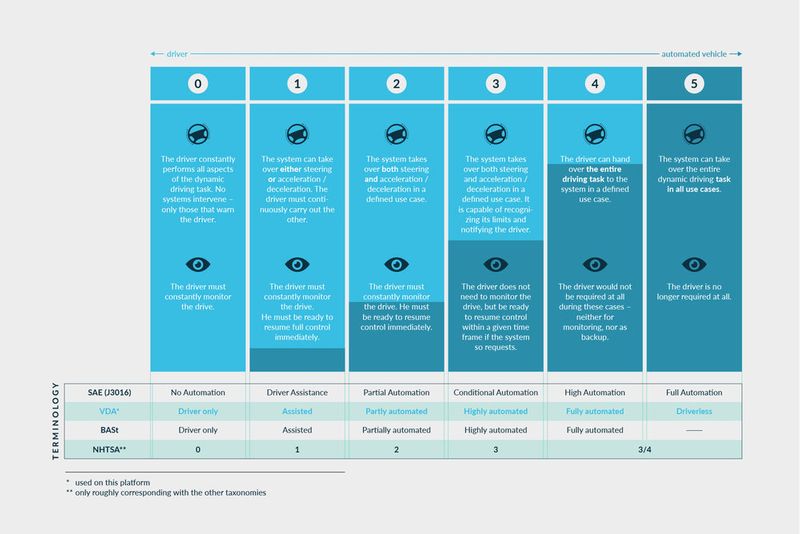

Uber is still a going concern, to be clear, but it's struggling as its costs rise and the negative externalities come home to roost. The investment case for Uber was always based on them securing either a monopoly on the ride-hailing market, or alternatively a breakthrough in self-driving technology that would let them do away with their highest cost: the pesky human element, the actual drivers.

I think it's inarguable that this original investment case has not worked out, and a lot of the shine has come off Uber as the investor subsidy goes away and prices rise to reflect actual costs.

From Four Wheels To Two

Now, the same mechanisms are playing out in the dockless scooter — aka "micromobility" — market:

> Today, a scooter rental ride hardly seems like a bargain. At typical rates, which include an upfront and per-minute fee, a 20-minute ride would cost about $6. That’s more than a quick bus or subway ride in places that offer those options.
>
> Still, last-mile transportation remains a tricky niche to fill in urban networks, and scooters do have a place in the mix. We’re not done with them yet. Just don’t expect the days—or valuations—of the peak scooter era to return any time soon.

I have used these services, and broadly speaking, I'm a fan. The companies behind them are not worth bazillions of CURRENCY_UNITS, though, because micromobility is obviously a terrible market for that purpose: barriers to entry are low, and operating costs scale linearly with network size.

As it happens, both of these issues can be addressed with some good old-fashioned regulation — the sort of thing that happens in maturing markets. Now that the public has expressed interest in these new options, each city can choose how the services should operate. In my small hometown, a single vendor has been approved, with a cap on the number of vehicles and on speed in the centre of town (GPS-enforced, natch). Crucially, the scooters are not just abandoned wherever, getting in people's way; they live in specific "parking lots" (repurposed car parking spots). Paris has taken a similar approach, requiring riders to photograph where they left their ride to ensure it's not placed somewhere it shouldn't be, and fining or barring riders who do not park correctly.

I just hope that we can reach the same result as we did with Uber: all of the good aspects of the service, without the horrible VC-inflated bits. I like that I can rock up in a strange city, pull out my phone, and within a minute or two be on an e-bike. It's not often practical to travel with my own bike, so these rental services have real potential.

Moses did not get to enter the Promised Land. Uber and Lime are still with us, but with rather diminished ambitions. But as long as we get to that Promised Land of a fully integrated and ubiquitous transport network, the creative destruction was worth it, and we travellers will be happy.

🖼️ Photos by Austin Distel and Hello I'm Nik on Unsplash