My current gig involves making comparisons between different products. It’s mostly interesting, and helps me understand better what the use cases are for all of these products — not just how they are built, but why. That double layer of analysis is what takes most of the work, because those two aspects — the how and the why — are never, ever documented in the same place. I spend a lot of time trawling through product docs and cross-comparing them to blogs, press reports, and most annoying of all, recorded videos.

Fundamentally, most reviews or evaluations tend to focus either on how well a product delivers its promised capabilities, or on how well it serves its users’ purposes. Those are not at all the same thing! A mismatch here is how you get products that are known to be powerful, but not user-friendly.

One frequent dichotomy is between designing to serve power users versus novices. It is very, very hard to serve both groups well! The handholding and signposting that people require when they are new to a product, and perhaps even to the entire domain, are liable to be perceived as clutter that gets in the way of what more experienced users already know how to achieve. The ultimate example is the split between graphical and command-line interfaces: the sorts of pithy incantations that can be condensed into a dozen keystrokes at a shell prompt might take whole minutes of clicking, dragging, and selecting from menus in the GUI. On the other hand, if you don’t know those commands already, the windows, menus, and breadcrumbs, perhaps combined with judicious use of contextual help, give a much more discoverable route to the functionality.

Ideally, what you want to do is serve both user populations equally well: surprise and delight new users at first contact, but keep on making them happy and productive once they’re on board.

Two Tribes Go To War: Dev and Ops

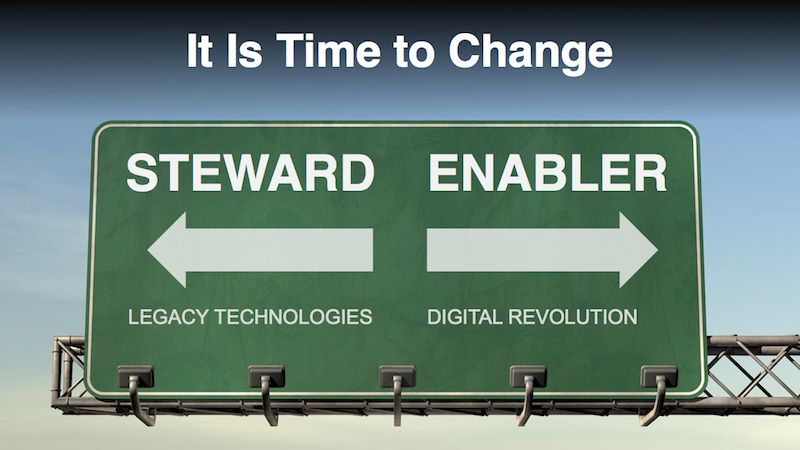

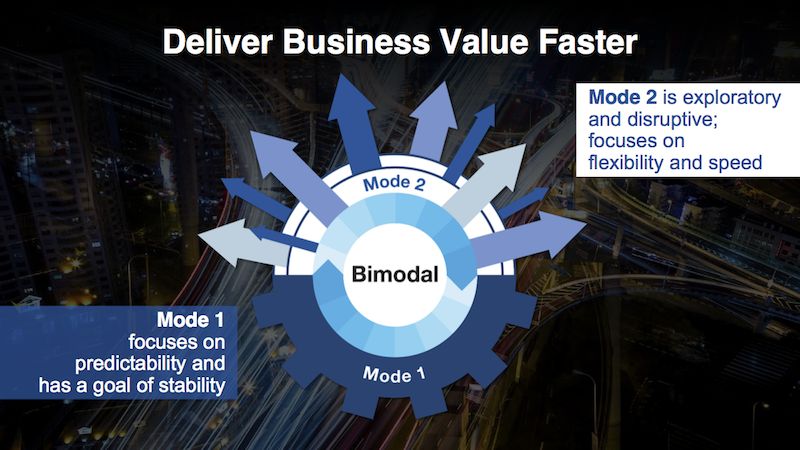

There are two forces that are always tugging IT in different directions: stability, maintainability, and solid foundations (historically, this has been called Ops), versus ease of building and agility (this one is Dev). Business needs both, which is why Gartner came up with the idea of "bimodal IT" to describe the difference between these two approaches.

For more on this topic, listen to Episode Ten of Roll For Enterprise, which digs into how "legacy" became a dirty word in IT.

The problem, and the reason for a lot of the pushback on the mere idea of bimodal IT, is that most measurement frameworks evaluate against only one of the two axes. This is how we get to the failure modes of bimodal: the cool kids playing with new tech, and boring, uncool legacy tech left in a forgotten corner.

Technologies can be successful while concentrating only on stability or only on agility, depending on target market; think of how successful Windows was in the 95-98-ME era despite tragic levels of instability, or for a more positive example, look at something like QNX quietly running nuclear reactors. However, in most cases it’s best to deliver at least some modicum of both stability and agility.

It’s About Time

Another factor to bear in mind is the different timescales that are in play. The lifetime of services can be short, in which case it’s okay to iterate rapidly and obsolesce equally rapidly; or, more pithily, to "move fast and break things", without slowing down to update the docs. On the other hand, the lifecycle of users can be long, if you treat them right at first contact. This is why it’s so important to strike a balance between onboarding new users quickly and servicing existing ones. If you turn off new users to please existing power users, you’ll regret it quickly; by definition, there are more potential users out there than existing ones. The precise balance point to aim for depends on what percentage of the total addressable user base has already adopted your service, but there will always be new or more casual users who can benefit from helpful features, as long as those features don’t get in the way of people who already know what they’re doing.

There’s no easy way to resolve the tension between agility and stability, speed of development and ease of maintenance, or power-user features and helpful onboarding. All I can suggest is to try to keep both sides in mind, because I have seen entire products sunk by an excessive focus on one or the other. If you do decide to focus your efforts on one side of the balance, that may still be fine, just as long as you’re conscious of what you’re giving up.

🖼️ Photos by MILKOVÍ and Aron Visuals on Unsplash